Fundamentals of Apache Spark

Knowledge Hut

MAY 3, 2024

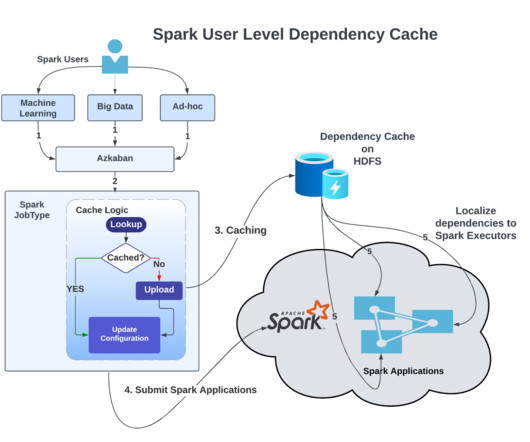

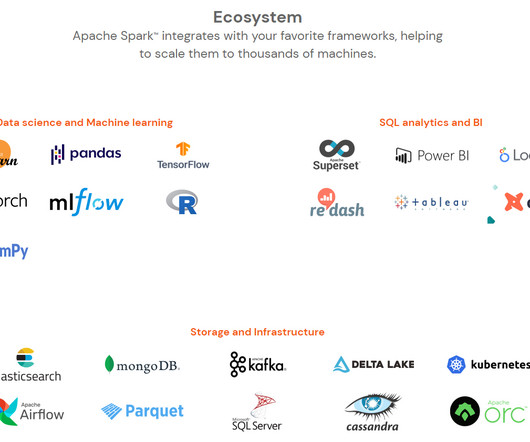

Cluster computing: the efficient processing of data on a set of computers (commodity hardware, in this context) or on distributed systems. It is also called a parallel data processing engine in some definitions. Spark is used for big data analytics and related processing. Hadoop and Spark can run on a common resource manager ( Ex.
