OLAP vs. OLTP: A Comparative Analysis of Data Processing Systems

KDnuggets

AUGUST 21, 2023

A comprehensive comparison between OLAP and OLTP systems, exploring their features, data models, performance needs, and use cases in data engineering.

Tweag

APRIL 26, 2023

Moreover, these steps can be combined in different ways, perhaps omitting some or changing the order of others, producing different data processing pipelines tailored to a particular task at hand. The reader is assumed to be somewhat familiar with the DataKinds and TypeFamilies extensions, but we will review some peculiarities.

Peak Performance: Continuous Testing & Evaluation of LLM-Based Applications

From Developer Experience to Product Experience: How a Shared Focus Fuels Product Success

Understanding User Needs and Satisfying Them

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You Need to Know

Precisely

JULY 25, 2023

Organizations that run SAP can use Excel-to-SAP automation to do more with less, while also increasing agility and improving their SAP master data management process automation. We bring automation closer to the business users who own the data and the day-to-day processes that drive the business. Check out our free ebook.

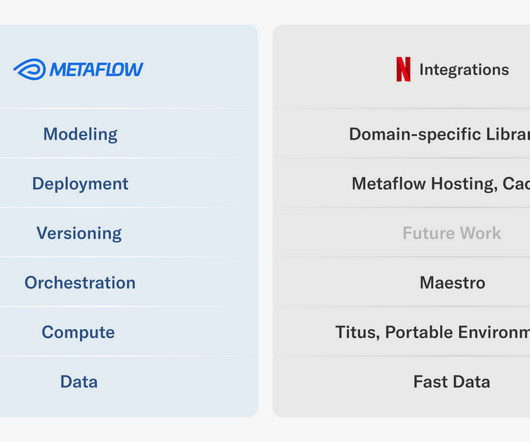

Netflix Tech

MARCH 7, 2024

The Machine Learning Platform (MLP) team at Netflix provides an entire ecosystem of tools around Metaflow, an open source machine learning infrastructure framework we started, to empower data scientists and machine learning practitioners to build and manage a variety of ML systems.

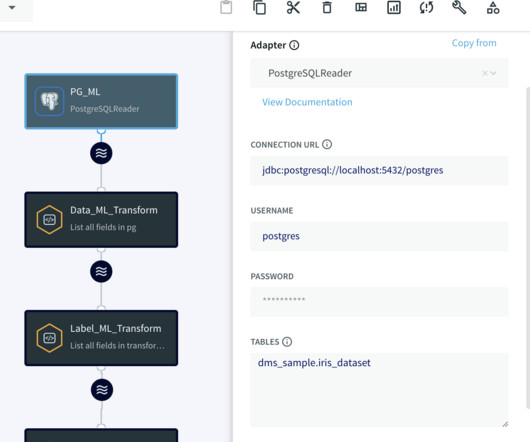

Striim

NOVEMBER 17, 2023

Striim serves as a real-time data integration platform that seamlessly and continuously moves data from diverse data sources to destinations such as cloud databases, messaging systems, and data warehouses, making it a vital component in modern data architectures.

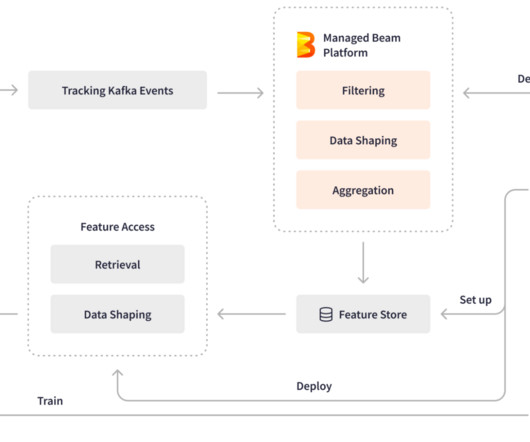

LinkedIn Engineering

OCTOBER 19, 2023

Authors: Bingfeng Xia and Xinyu Liu. Background: At LinkedIn, Apache Beam plays a pivotal role in stream processing infrastructures that process over 4 trillion events daily through more than 3,000 pipelines across multiple production data centers.

Snowflake

MARCH 16, 2023

“Ownership was difficult because we had replicas of the data everywhere, which meant we didn’t really know who to speak to about the different data sets.” A lack of data standardization from disconnected processes also posed a potential risk for John Lewis. “Governing it was overly onerous.”

Precisely

OCTOBER 2, 2023

Manual, error-prone SAP data processes simply don’t cut it anymore. Automating the processes that create and maintain the vast amounts of interdependent data that support your SAP ERP business processes is key to gaining agility, speed, and improved data quality and integrity. Automation.

Data Engineering Podcast

DECEMBER 31, 2018

Summary As more companies and organizations are working to gain a real-time view of their business, they are increasingly turning to stream processing technologies to fulfill that need. However, the storage requirements for continuous, unbounded streams of data are markedly different from those of batch-oriented workloads.

Knowledge Hut

JANUARY 30, 2024

ITIL Processes ITIL comprises several processes that make it extremely adaptable, scalable, and diverse. These processes consist of activities with specified inputs, causes, and outputs. Let's look at some of the ITIL Processes and ideas that underpin them. This process is completed through five successive activities.

Data Engineering Podcast

APRIL 24, 2022

WhyLogs is a powerful library for flexibly instrumenting all of your data systems to understand the entire lifecycle of your data from source to productionized model. You have full control over your data and their plugin system lets you integrate with all of your other data tools, including data warehouses and SaaS platforms.

Data Engineering Podcast

JULY 27, 2020

Summary A majority of the scalable data processing platforms that we rely on are built as distributed systems. Kyle Kingsbury created the Jepsen framework for testing the guarantees of distributed data processing systems and identifying when and why they break.

Cloudera

SEPTEMBER 11, 2018

The open data processing pipeline. IoT is expected to generate a volume and variety of data greatly exceeding what is being experienced today, requiring modernization of information infrastructure to realize value. Telemetry data routed to the Cloudera Enterprise Data Hub flows into Apache Kafka.

Databand.ai

JULY 19, 2023

Complete Guide to Data Ingestion: Types, Process, and Best Practices Helen Soloveichik July 19, 2023 What Is Data Ingestion? Data Ingestion is the process of obtaining, importing, and processing data for later use or storage in a database. In this article: Why Is Data Ingestion Important?

Booking.com Engineering

DECEMBER 2, 2022

From a technical perspective, solving this requires machine learning and operational infrastructure at scale, which processes performance feedback, assesses historical performance and, after running algorithms, communicates results back to a search engine provider. PPC as a business represents a global optimization problem.

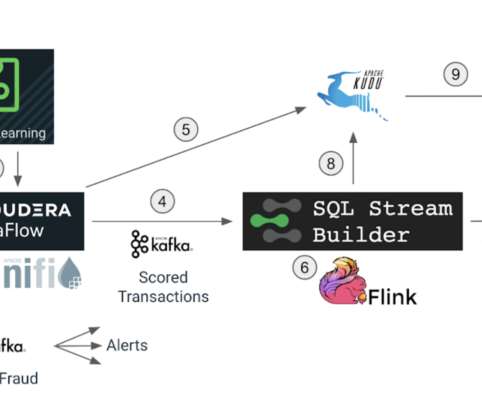

Cloudera

JULY 18, 2022

In this blog we will conclude the implementation of our fraud detection use case and understand how Cloudera Stream Processing makes it simple to create real-time stream processing pipelines that can achieve breakneck performance at scale. Data decays! It has a shelf life and as time passes its value decreases. Apache Flink.

Knowledge Hut

APRIL 25, 2024

If you pursue the MSc big data technologies course, you will be able to specialize in topics such as Big Data Analytics, Business Analytics, Machine Learning, Hadoop and Spark technologies, Cloud Systems etc. Look for a suitable big data technologies company online to launch your career in the field.

Analytics Vidhya

FEBRUARY 7, 2023

Introduction Data engineering is the field of study that deals with the design, construction, deployment, and maintenance of data processing systems. This includes designing and implementing […] The post Most Essential 2023 Interview Questions on Data Engineering appeared first on Analytics Vidhya.

Precisely

JULY 20, 2023

In a disruptive market, agility and speed are key to success and a competitive edge – and automating your critical SAP® processes helps unlock those capabilities. When you set out to improve data quality and integrity, it’s critical to keep in mind the interdependence of process and data.

Ripple Engineering

MARCH 2, 2021

How do you make a computer system maximally secure and reliable? Disconnect it from all networks and never change any of the software or data. How do you make a computer system maximally useful? Connect it to networks and make frequent changes to the software and data! What is SOC 2? Why does Ripple want to pass SOC 2?

Knowledge Hut

MAY 2, 2024

Apache Spark is a fast and general-purpose cluster computing system. It also supports a rich set of higher-level tools, including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming. If you don’t have java installed on your system.

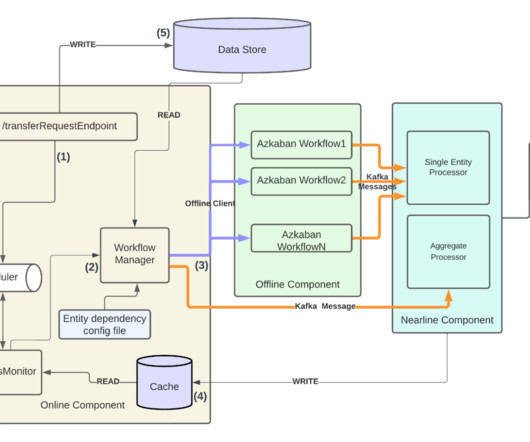

LinkedIn Engineering

JANUARY 19, 2024

This multi-entity handover process involves huge amounts of data updating and cloning. Data consistency, feature reliability, processing scalability, and end-to-end observability are key drivers to ensuring business as usual (zero disruptions) and a cohesive customer experience.

Data Engineering Weekly

MARCH 8, 2023

Pathway is a Python framework for realtime data stream processing that handles updates for you. You can set up your processing pipeline, and Pathway will ingest the new streaming data points for you, sending you alerts in realtime. This portion of the data is called a window.

Pinterest Engineering

SEPTEMBER 12, 2023

Behind the scenes, hundreds of ML engineers iteratively improve a wide range of recommendation engines that power Pinterest, processing petabytes of data and training thousands of models using hundreds of GPUs. In some cases, petabytes of data are streamed into training jobs to train a model.

Confluent

FEBRUARY 6, 2024

Confluent enables real-time, reliable, scalable, and secure communication between IoT devices, applications, and backend systems. Streamline data processing and unlock analytics to boost productivity and time to market while lowering infrastructure costs.

Precisely

FEBRUARY 29, 2024

Complexity is at the core of SAP automation challenges. The core challenges to automating SAP processes essentially boil down to complexity. The business processes themselves are complex, as are the data objects associated with each SAP record. Let’s start with the complexity of the business processes.

Striim

APRIL 22, 2024

Challenges The primary obstacle for Discovery Health was the sheer scale of data across disparate systems and technologies. This complexity led to significant delays in data processing, impacting their ability to make timely decisions and adversely affecting the customer experience. Sign up for a free trial today!

Netflix Tech

NOVEMBER 14, 2023

In this context, managing the data, especially when it arrives late, can present a substantial challenge! In this three-part blog post series, we introduce you to Psyberg, our incremental data processing framework designed to tackle such challenges! What is late-arriving data? How does late-arriving data impact us?

Precisely

APRIL 30, 2024

RPA is best suited for simple tasks involving consistent data. It’s challenged by complex data processes and dynamic environments. Complete automation platforms are the best solutions for complex data processes. Integration issues: Complex processes often involve interacting with multiple systems and applications.

Data Engineering Podcast

JANUARY 7, 2024

Summary Data processing technologies have dramatically improved in their sophistication and raw throughput. Unfortunately, the volumes of data that are being generated continue to double, requiring further advancements in the platform capabilities to keep up. What do you have planned for the future of your academic research?

Knowledge Hut

APRIL 23, 2024

For example, in 1880, the US Census Bureau needed to handle the 1880 Census data. They realized that compiling this data and converting it into information would take over 10 years without an efficient system. Thus, it is no wonder that the origin of big data is a topic many big data professionals like to explore.

Snowflake

APRIL 8, 2024

BigGeo: BigGeo accelerates geospatial data processing by optimizing performance and eliminating challenges typically associated with big data. Scientific Financial Systems: Beating the market is the driving force for investment management firms, but beating the market is not easy.

Striim

MAY 1, 2024

UPS Capital provides customs brokerage services to navigate import/export processes, supply chain optimization tools like supply chain analytics and inventory management, and technology solutions like the UPS Capital Merchant Services platform and UPS Capital Cargo Finance platform.

Knowledge Hut

MARCH 7, 2024

The year 2024 saw some enthralling changes in volume and variety of data across businesses worldwide. The surge in data generation is only going to continue. Foresighted enterprises are the ones who will be able to leverage this data for maximum profitability through data processing and handling techniques.

Knowledge Hut

APRIL 23, 2024

With the advent of technology and the arrival of modern communications systems, computer science professionals worldwide realized the size and value of big data. As big data evolves and unravels more technology secrets, it might help users achieve ambitious targets. Top 10 Disadvantages of Big Data 1.

Data Engineering Weekly

MAY 5, 2024

Uber: From Predictive to Generative – How Michelangelo Accelerates Uber’s AI Journey. Constantly adopting and implementing tech advancements within an existing system indicates efficient engineering. Hallucinations and the system's lack of explainability are the primary reasons for mistrust in Gen AI.

Precisely

APRIL 11, 2024

This initiative is a testament to how partnerships, innovation, and a commitment to excellence can redefine the landscape of cloud computing for legacy systems. This rigorous testing process not only tested our resolve but also provided a unique opportunity to enhance our product.

Data Engineering Weekly

MAY 16, 2023

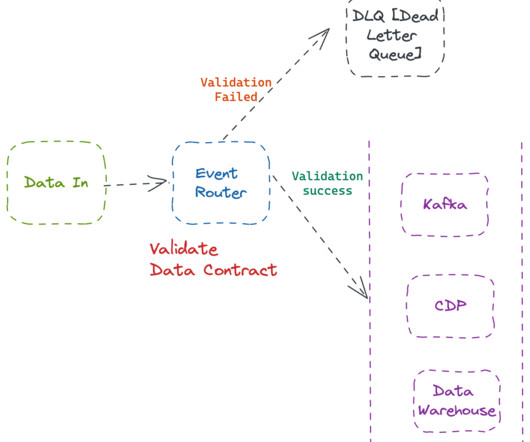

In the first part of this series, we talked about design patterns for data creation and the pros & cons of each system from the data contract perspective. In the second part, we will focus on architectural patterns to implement data quality from a data contract perspective. Why is Data Quality Expensive?

Knowledge Hut

APRIL 29, 2024

The stakes are high in the banking and financial industry, since substantial financial sums are at risk and there is potential for significant economic upheaval if banks and other financial systems are compromised. One of the officials fell for the phishing email and clicked on a dubious link, which allowed the malware to hack the system.

Knowledge Hut

APRIL 23, 2024

Big Data vs Small Data: Volume. Big Data refers to large volumes of data, typically on the order of terabytes or petabytes. It involves processing and analyzing massive datasets that cannot be managed with traditional data processing techniques. Small Data is collected and processed at a slower pace.

Netflix Tech

DECEMBER 14, 2023

Engineers from across the company came together to share best practices on everything from Data Processing Patterns to Building Reliable Data Pipelines. The result was a series of talks which we are now sharing with the rest of the Data Engineering community!

Knowledge Hut

JANUARY 18, 2024

“Big Data Analytics” is a phrase that was coined to refer to datasets so large that traditional data processing software simply can’t manage them. For example, big data is used to pick out trends in economics, and those trends and patterns are used to predict what will happen in the future.

Snowflake

MARCH 14, 2024

They applied solutions like SAP BusinessObjects Data Services, Fivetran and Qlik, or used extractors to get SAP data into SAP BW and then attached more tools to get the data from SAP BW into other systems. Those trade-offs became less acceptable as demand for near real-time data and analytics increased.

Towards Data Science

NOVEMBER 16, 2023

Data Management: A tutorial on how to use VDK to perform batch data processing. Versatile Data Kit (VDK) is an open-source data ingestion and processing framework designed to simplify data management complexities.
