Introducing the SQL AI Assistant: Create, Edit, Explain, Optimize, and Fix Any Query

Cloudera

DECEMBER 21, 2023

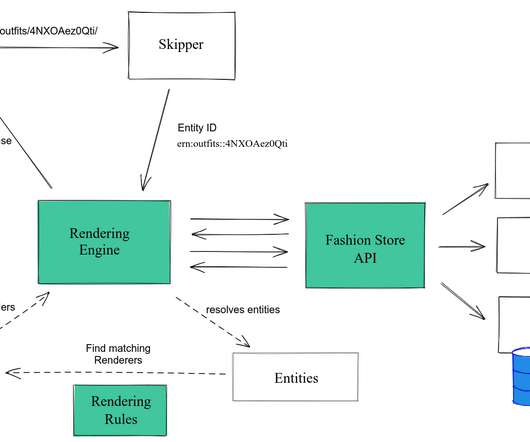

In the “assumptions” field, we can see how the SQL AI Assistant examined our data model and compared it against our request: it identified the tables, columns, and joins needed to produce the list we’re looking for. As a bonus, it also writes the query for us, saving even more time!
