Top 10 Hadoop Tools to Learn in Big Data Career 2024

Knowledge Hut

DECEMBER 21, 2023

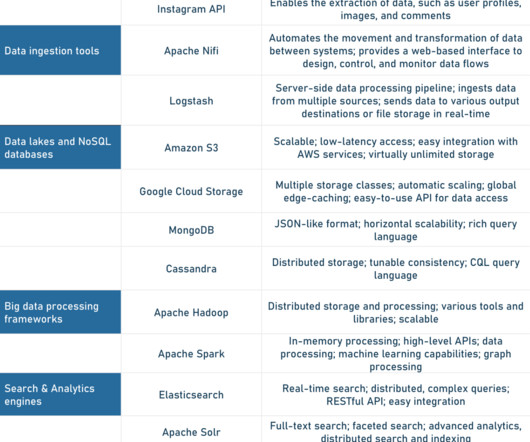

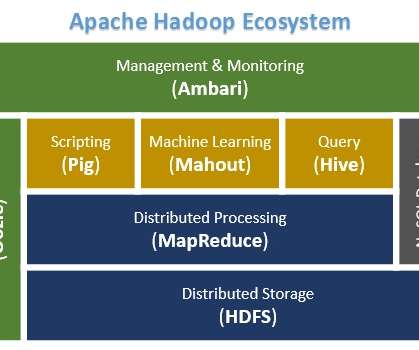

To build a career in big data, you need a working knowledge of several core technologies, and Hadoop is one of them. Hadoop tools are frameworks that help process massive amounts of data and run computations on it. What is Hadoop? Hadoop is an open-source framework written in Java.
