Introducing Apache Kafka 3.6

Confluent

OCTOBER 11, 2023

Apache Kafka 3.6 brings Tiered Storage Early Access, migrating clusters from ZooKeeper to KRaft with no downtime, a grace period for stream-table joins, and more!

Confluent

FEBRUARY 7, 2023

Migrate Kafka clusters from ZooKeeper to KRaft with no downtime (early access), get improvements for Kafka Streams and Kafka Connect, and more.

How to Optimize the Developer Experience for Monumental Impact

Generative AI Deep Dive: Advancing from Proof of Concept to Production

Understanding User Needs and Satisfying Them

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You Need to Know

Leading the Development of Profitable and Sustainable Products

Cloudera

OCTOBER 4, 2022

This blog post will provide guidance to administrators currently using or interested in using Kafka nodes to maintain cluster changes as they scale up or down to balance performance and cloud costs in production deployments. Kafka brokers contained within host groups enable the administrators to more easily add and remove nodes.

Cloudera

MARCH 22, 2021

It is the most secure deployment option, but this prevents direct access to their resources from the public internet and makes it difficult for their users to access the UIs and APIs in SDX and DataHub clusters. Today, Cloudera has launched the CDP Endpoint Access Gateway.

Rockset

AUGUST 16, 2022

In either case, both Amazon Kinesis and Apache Kafka can help, but which one is the right fit for you and your goals? Real quick disclaimer: I currently work at Rockset but previously worked at Confluent, a company known for building Kafka-based platforms and cloud services. Let’s find out!

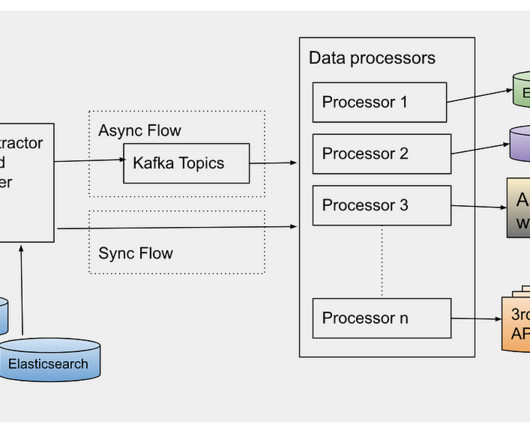

Afterpay Tech

SEPTEMBER 6, 2022

By: Jing Li. Summary: This article articulates the challenges, innovation and success of the Kafka implementation in Afterpay’s Global Payments Platform in the PCI zone. Context: The asynchronous processing capability that Kafka offers opens up numerous innovation opportunities to interact with other services.

Cloudera

SEPTEMBER 15, 2020

introduces fine-grained authorization for access to Azure Data Lake Storage using Apache Ranger policies. Cloudera and Microsoft have been working together closely on this integration, which greatly simplifies the security administration of access to ADLS-Gen2 cloud storage. Use case #1: authorize users to access their home directory.

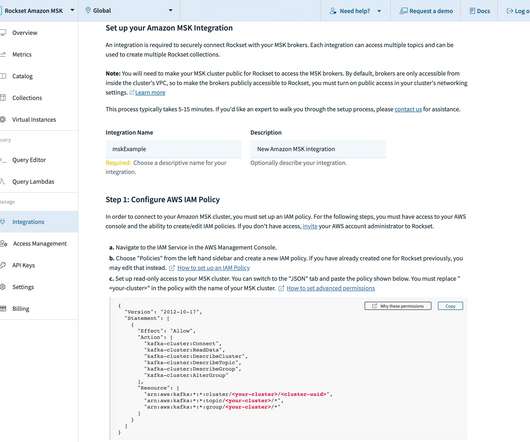

Rockset

DECEMBER 14, 2022

Rockset’s native connector for Amazon Managed Streaming for Apache Kafka (MSK) makes it simpler and faster to ingest streaming data for real-time analytics. Amazon MSK is a fully managed AWS service that gives users the ability to build and run applications using Apache Kafka.

Data Engineering Podcast

JULY 1, 2019

Scaling the volume of events that can be processed in real-time can be challenging, so Paul Brebner from Instaclustr set out to see how far he could push Kafka and Cassandra for this use case. By integrating each silo independently – data is able to integrate without any direct relation. At CluedIn they call it “eventual connectivity”.

Rockset

OCTOBER 21, 2022

When building a real-time customer 360 app, you’ll definitely need event data from a streaming data source, like Kafka. We’ll be building a basic version of this using Kafka, S3, Rockset, and Retool. We’ll integrate with Kafka and S3 through Rockset’s data connectors. user_purchases_v1 These are purchases made by the customer.

Rockset

SEPTEMBER 14, 2021

We’re introducing a new Rockset Integration for Apache Kafka that offers native support for Confluent Cloud and Apache Kafka, making it simpler and faster to ingest streaming data for real-time analytics. With the Kafka Integration, users no longer need to build, deploy or operate any infrastructure component on the Kafka side.

Rockset

APRIL 26, 2022

As Kafka Summit is in full swing in London this week and the topic of event streaming is all over my LinkedIn feed, I saw a post asking "Is streaming dead?" That streaming is rocking and with Kafka Summit this week, I thought it a good time to emphasize the importance of streaming data in today’s modern real-time data stack.

Confluent

MAY 13, 2019

In the last year, we’ve experienced enormous growth on Confluent Cloud, our fully managed Apache Kafka ® service. As Confluent Cloud has grown, we’ve noticed two gaps that very clearly remain to be filled in managed Apache Kafka services. Five seconds to Kafka (or, never make another cluster again!).

Rockset

JULY 28, 2022

Options for Change Data Capture on MongoDB: Apache Kafka. The native CDC architecture for capturing change events in MongoDB uses Apache Kafka. MongoDB provides Kafka source and sink connectors that can be used to write the change events to a Kafka topic and then output those changes to another system such as a database or data lake.

Cloudera

DECEMBER 10, 2020

In the previous post, we talked about Kerberos authentication and explained how to configure a Kafka client to authenticate using Kerberos credentials. In this post we will look into how to configure a Kafka client to authenticate using LDAP, instead of Kerberos. We use the Kafka-console-consumer for all the examples below.

Confluent

APRIL 9, 2019

I’m excited to announce that we’re partnering with Google Cloud to make Confluent Cloud, our fully managed offering of Apache Kafka ® , available as a native offering on Google Cloud Platform (GCP). Confluent’s founders didn’t just write the original code of Apache Kafka, we also ran it as a service at massive scale.

Precisely

DECEMBER 28, 2023

Enterprise technology is having a watershed moment; no longer do we access information once a week, or even once a day. One very popular platform is Apache Kafka , a powerful open-source tool used by thousands of companies. But in all likelihood, Kafka doesn’t natively connect with the applications that contain your data.

Data Engineering Podcast

DECEMBER 24, 2023

Summary Kafka has become a ubiquitous technology, offering a simple method for coordinating events and data across different systems. Can you describe your experiences with Kafka? What are the operational challenges that you have had to overcome while working with Kafka? When is Kafka the wrong choice?

Confluent

AUGUST 29, 2019

We know that Apache Kafka ® is great when you’re dealing with streams, allowing you to conveniently look at streams as tables. In an identity/access management application, it’s the relationships between roles and their privileges that matter most. The approach we’ll use works with any Kafka run though. 8, and so on.

Netflix Tech

MARCH 10, 2023

Studio applications use this service to store their media assets, which then goes through an asset cycle of schema validation, versioning, access control, sharing, triggering configured workflows like inspection, proxy generation etc. This pattern grows over time when we need to access and update the existing assets metadata.

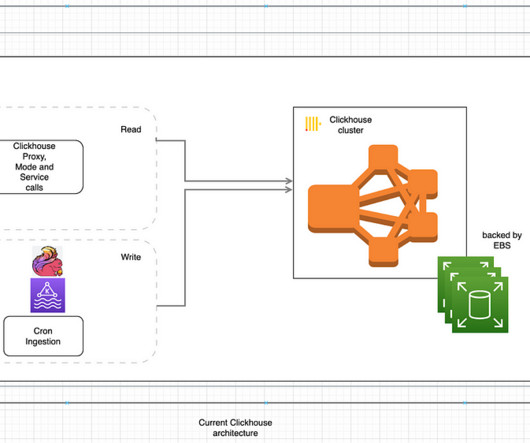

Lyft Engineering

NOVEMBER 29, 2023

Real-time Ingestion Events from our real-time analytics pipeline were configured to be sent into our internal Flink application, streamed to Kafka, and written into Druid. ioConfig: Kafka server info, topic names, etc. (ex. Kafka → ClickHouse: this is primarily used by our services which rely on a pub-sub model.

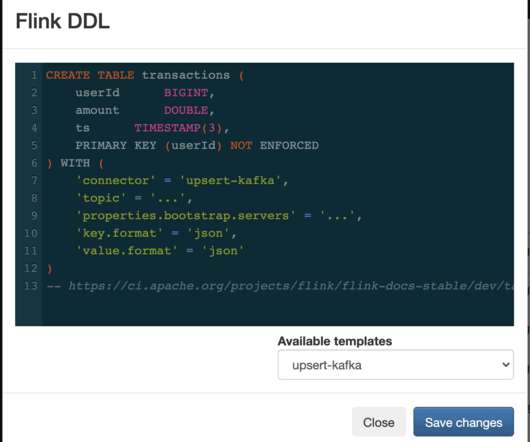

Cloudera

JUNE 7, 2021

It enabled users to easily write, run and manage real-time SQL queries on streams from Apache Kafka with an exceptionally smooth user experience. Improved Kafka and Schema Registry integration. Flink SQL catalogs are now supported directly on the streambuilder platform allowing easy access to data stored in other systems.

Cloudera

FEBRUARY 21, 2023

This transformation can be performed on incoming records of a Kafka topic before SSB sees the data. If the Kafka topic has CSV data that we want to add keys and types to. If the schema you want does not match the incoming Kafka topic. Data transformations can be defined using the Kafka Table Wizard.

Cloudera

JULY 18, 2022

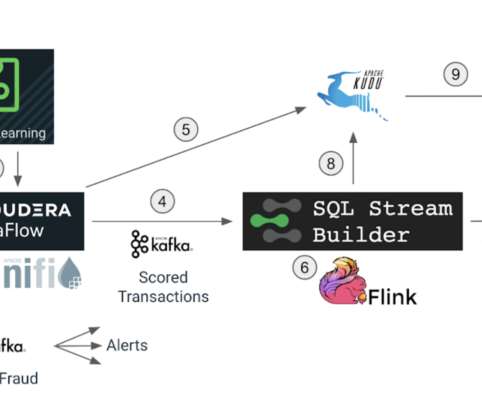

This information will be efficiently fed to downstream systems through Kafka, so that appropriate actions, like blocking the card or calling the user, can be initiated immediately. The scored transactions are written to the Kafka topic that will feed the real-time analytics process that runs on Apache Flink.

Data Engineering Weekly

DECEMBER 3, 2023

[link] Sophie Blee-Goldman: Kafka Streams and Rebalancing through the Ages. Consumers come and go. Kafka rebalancing has come a long way since then, and the author walks us down the memory lane of Kafka rebalancing and the advancements made ever since. Partitions, ever-present. Rebalancing, the awkward middle child.

Cloudera

JANUARY 31, 2024

Streams Replication Manager (SRM) is an enterprise-grade replication solution that enables fault tolerant, scalable, and robust cross-cluster Kafka topic replication. Introduction Kafka as an event streaming component can be applied to a wide variety of use cases. Replication can be dynamically enabled for topics and consumer groups.

Cloudera

MAY 1, 2023

In case of SSB projects, you might want to define Data Sources (such as Kafka providers or Catalogs ), Virtual tables , User Defined Functions (UDFs) , and write various Flink SQL jobs that use these resources. Resources that the user has access to can be found under “External Resources”. brokers, trust store) Catalog properties (e.g.

Cloudera

AUGUST 10, 2021

Impala Row Filtering to set access policies for rows when reading from a table. Atlas / Kafka integration provides metadata collection for Kafka producers/consumers so that consumers can manage, govern, and monitor Kafka metadata and metadata lineage in the Atlas UI. Figure 1: sales group SELECT access.

Zalando Engineering

NOVEMBER 29, 2017

See Ranking Websites in Real-time with Apache Kafka’s Streams API for the first post in the series. Running Kafka Streams applications in AWS At Zalando, Europe’s leading online fashion platform, we use Apache Kafka for a wide variety of use cases. Our team at Zalando was an early adopter of the Kafka Streams API.

Confluent

MARCH 15, 2022

Access to Apache Kafka® record headers will enable a whole host of new […]. We are excited to announce ksqlDB 0.24! It comes with a slew of improvements and new features.

Data Engineering Weekly

SEPTEMBER 24, 2023

Request Access to Data Validate with Exploration [link] Ramp: How Ramp Accelerated Machine Learning Development to Simplify Finance Ramp writes about its machine learning infrastructure and the choice of Metaflow for running the ML workload. Those challenges are a thing of the past with RudderStack’s Kafka source integration.

Cloudera

MARCH 2, 2023

It allows multiple data processing engines, such as Flink, NiFi, Spark, Hive, and Impala to access and analyze data in simple, familiar SQL tables. Recently, we announced enhanced multi-function analytics support in Cloudera Data Platform (CDP) with Apache Iceberg. Iceberg is a high-performance open table format for huge analytic data sets.

Data Engineering Podcast

SEPTEMBER 28, 2020

Summary Kafka has become a de facto standard interface for building decoupled systems and working with streaming data. To make the benefits of the Kafka ecosystem more accessible and reduce the operational burden, Alexander Gallego and his team at Vectorized created the Red Panda engine.

Rockset

MARCH 19, 2020

Intro In recent years, Kafka has become synonymous with “streaming,” and with features like Kafka Streams, KSQL, joins, and integrations into sinks like Elasticsearch and Druid, there are more ways than ever to build a real-time analytics application around streaming data in Kafka.

Cloudera

SEPTEMBER 28, 2020

The customer also wanted to utilize the new features in CDP PvC Base like Apache Ranger for dynamic policies, Apache Atlas for lineage, comprehensive Kafka streaming services and Hive 3 features that are not available in legacy CDH versions. Attribute-based access control and SparkSQL fine-grained access control. Cluster Type.

Monte Carlo

SEPTEMBER 8, 2023

If a platform application has incorrect access or is having a generated query that fails, these monitors help keep our data engineering team informed and proactive in helping users of the platform. Luckily the pipeline is well instrumented with start and end times of each stage saved to a central Kafka topic.

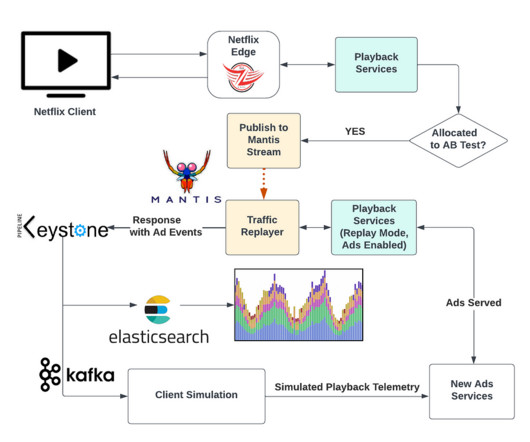

Netflix Tech

JUNE 1, 2023

We stored these responses in a Keystone stream with outputs for Kafka and Elasticsearch. A Kafka consumer retrieved the playback manifests with ad metadata and simulated a device playing the content and triggering the impression-tracking events. It also included metadata about ads, such as ad placement and impression-tracking events.

Knowledge Hut

JUNE 26, 2023

Top Data Engineering Projects with Source Code Data engineers make unprocessed data accessible and functional for other data professionals. Source Code: Stock and Twitter Data Extraction Using Python, Kafka, and Spark 2. If you are struggling with Data Engineering projects for beginners, then Data Engineer Bootcamp is for you.

Precisely

JULY 21, 2023

Used by more than 75% of the Fortune 500, Apache Kafka has emerged as a powerful open source data streaming platform to meet these challenges. But harnessing and integrating Kafka’s full potential into enterprise environments can be complex. This is where Confluent steps in.

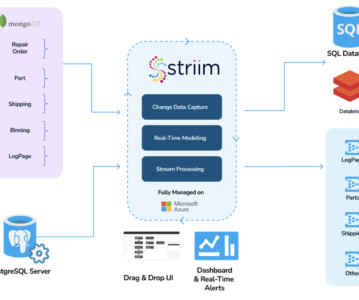

Striim

AUGUST 26, 2023

Users often have to grapple with intricate, low-level Kafka elements like topics, brokers, partitions, taking focus away from more strategic tasks. AWS MSK : An Apache Kafka-compatible managed streaming platform that also allows users to access other AWS services directly. Frequently Asked Questions What is Apache Kafka?

Christophe Blefari

JULY 3, 2023

There are so many sessions at both summits that it is impossible to watch everything; moreover, Databricks and Snowflake do not make everything freely accessible online, so I can't watch everything. With TS you can define insights and access to them; with Mode they gain an end-user application that people are already using.

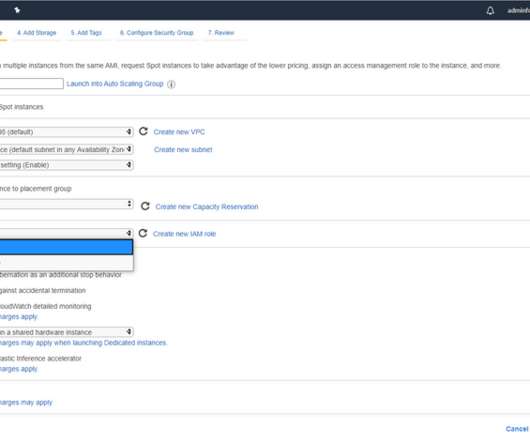

Team Data Science

JUNE 6, 2020

I should note that if you have created an AWS account, but have not yet created an Identity Access Management (IAM) admin role, and are therefore still using root credentials, I am strongly urging you now to set that up before moving forward. There are a few ways AWS will let you access an EC2 instance once it is launched.

Cloudera

MARCH 5, 2024

Struggling to access and collect, oftentimes disparate and siloed, data across environments that are required to power AI, many organizations are unable to achieve the business insight and value they had hoped for. Rolling upgrades are now supported for HDFS, Hive, HBase, Kudu, Kafka, Ranger, YARN, and Ranger KMS.

Snowflake

DECEMBER 5, 2023

To ensure data remains protected from unintended use, Snowflake Cortex (now in private preview) gives users access to industry-leading LLMs (e.g., In addition, Snowflake users can more quickly create custom models with imported data by accessing ready-to-use foundational models from Amazon Bedrock and Amazon SageMaker Jumpstart.
