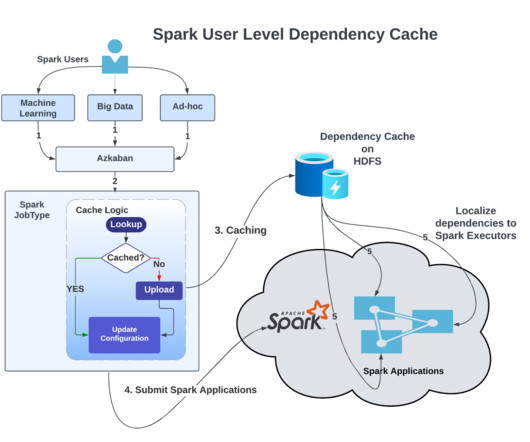

Reducing Apache Spark Application Dependencies Upload by 99%

LinkedIn Engineering

MARCH 9, 2023

We run nearly 100,000 Spark applications every day on our Apache Hadoop YARN clusters (more on how we scaled YARN clusters here). To run them, we upload nearly 30 million dependencies to the Apache Hadoop Distributed File System (HDFS) daily, or roughly 300 per application on average. YARN Shared Cache is a common example of a cluster-level cache implementation.
