Data Lakes and SQL: A Match Made in Data Heaven

KDnuggets

JANUARY 16, 2023

In this article, we will discuss the benefits of using SQL with a data lake and how it can help organizations unlock the full potential of their data.

KDnuggets

JUNE 27, 2022

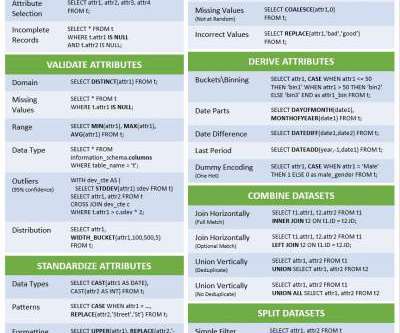

If your raw data is in a SQL-based data lake, why spend the time and money to export the data into a new platform for data prep?

How to Optimize the Developer Experience for Monumental Impact

Generative AI Deep Dive: Advancing from Proof of Concept to Production

Understanding User Needs and Satisfying Them

Beyond the Basics of A/B Tests: Highly Innovative Experimentation Tactics You Need to Know

Leading the Development of Profitable and Sustainable Products

Data Engineering Podcast

AUGUST 3, 2021

Summary: Data lake architectures have largely been biased toward batch processing workflows because of the volume of data they are designed for. With more real-time requirements and the increasing use of streaming data, there has been a struggle to merge fast, incremental updates with large, historical analysis.
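The merge problem described above can be sketched in a few lines. This is a minimal illustration, not the approach discussed in the episode. It uses Python's built-in `sqlite3` module as a stand-in for a lake table format. The `events` table, its columns, and the `merge_increment` helper are all hypothetical. An upsert lets a small incremental batch overwrite older versions of a key while leaving the rest of the historical rows intact.

```python
import sqlite3

def merge_increment(conn, rows):
    """Upsert (id, value, updated_at) rows into the hypothetical `events` table."""
    # Newer versions of a key overwrite older ones; late, stale updates are ignored.
    conn.executemany(
        """
        INSERT INTO events (id, value, updated_at) VALUES (?, ?, ?)
        ON CONFLICT(id) DO UPDATE SET
            value = excluded.value,
            updated_at = excluded.updated_at
        WHERE excluded.updated_at > events.updated_at
        """,
        rows,
    )
    conn.commit()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, value TEXT, updated_at INTEGER)")

# Large historical load (batch)
merge_increment(conn, [(1, "a", 100), (2, "b", 100), (3, "c", 100)])
# Small streaming increment: one newer update, one stale update, one new key
merge_increment(conn, [(2, "b2", 200), (3, "stale", 50), (4, "d", 200)])

result = conn.execute("SELECT id, value FROM events ORDER BY id").fetchall()
print(result)  # [(1, 'a'), (2, 'b2'), (3, 'c'), (4, 'd')]
```

The `updated_at` comparison in the `WHERE` clause keeps the late-arriving, older `(3, "stale", 50)` row from overwriting newer data. Out-of-order arrival is one of the concerns that comes up when streaming and batch writes land in the same table.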

phData: Data Engineering

SEPTEMBER 19, 2023

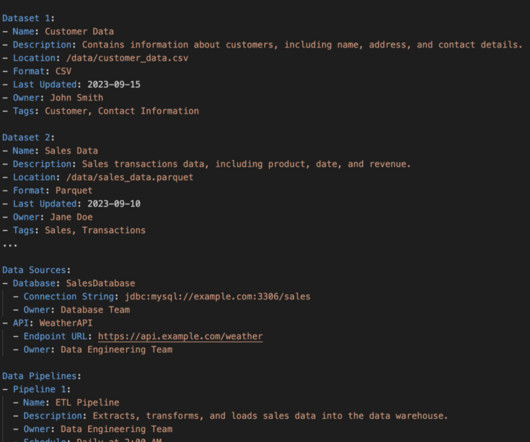

With the amount of data companies are using growing to unprecedented levels, organizations are grappling with the challenge of efficiently managing and deriving insights from these vast volumes of structured and unstructured data. What is a Data Lake? Consistency of data throughout the data lake.

Monte Carlo

APRIL 24, 2023

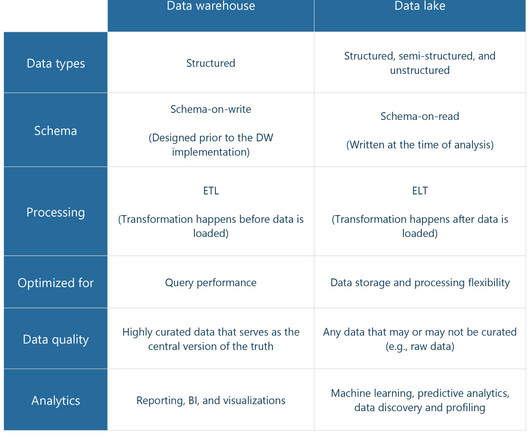

Data lakes are useful, flexible data storage repositories that enable many types of data to be stored in their rawest state. Traditionally, after being stored in a data lake, raw data was often moved to various destinations, such as a data warehouse, for further processing, analysis, and consumption.

DareData

JULY 5, 2023

Learn how we build data lake infrastructures and help organizations all around the world achieve their data goals. In today's data-driven world, organizations face the challenge of managing and processing large volumes of data efficiently.

AltexSoft

AUGUST 29, 2023

In 2010, a transformative concept took root in the realm of data storage and analytics: the data lake. The term was coined by James Dixon, a back-end Java, data, and business intelligence engineer, and it started a new era in how organizations could store, manage, and analyze their data. What is a data lake?

Christophe Blefari

FEBRUARY 23, 2024

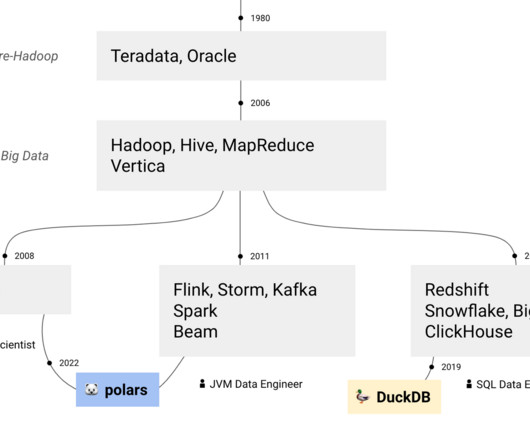

JVM vs. SQL data engineer: there's a big discussion in the community about what real data engineering is. Is it DataFrames or SQL? Is it lake or warehouse? It's a sterile debate: both are useful and can serve different organisations with different service levels for data users and stakeholders.

Data Engineering Podcast

OCTOBER 28, 2021

He also explains why he started Decodable to address that limitation and the work that he and his team have done to let data engineers build streaming pipelines entirely in SQL. Start trusting your data with Monte Carlo today! The data you’re looking for is already in your data warehouse and BI tools.

Data Engineering Podcast

NOVEMBER 11, 2018

Summary: A data lake can be a highly valuable resource, as long as it is well built and well managed. In this episode Yoni Iny, CTO of Upsolver, discusses the various components that are necessary for a successful data lake project, how the Upsolver platform is architected, and how modern data lakes can benefit your organization.

Hevo

MAY 17, 2024

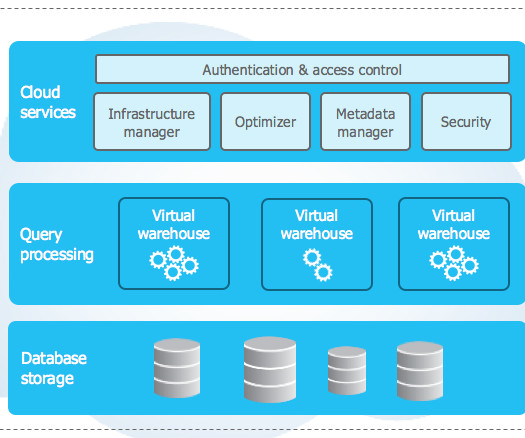

Snowflake Data Warehouse delivers essential infrastructure for handling both data lake and data warehouse needs. Its multi-cluster architecture lets it store semi-structured and structured data in one place, and users can query that data independently using SQL.

KDnuggets

JANUARY 18, 2023

7 Best Platforms to Practice SQL • Explainable AI: 10 Python Libraries for Demystifying Your Model's Decisions • ChatGPT: Everything You Need to Know • Data Lakes and SQL: A Match Made in Data Heaven • Google Data Analytics Certification Review for 2023

Data Engineering Podcast

SEPTEMBER 7, 2020

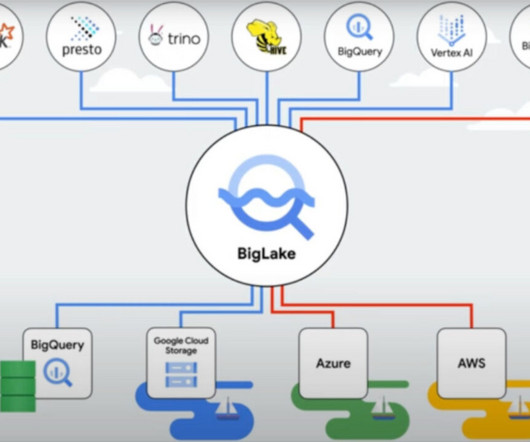

In this episode he explains how it is designed to allow for querying and combining data where it resides, the use cases that such an architecture unlocks, and the innovative ways that it is being employed at companies across the world. What are the tradeoffs of using Presto on top of a data lake vs a vertically integrated warehouse solution?
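Presto itself is not shown here. As a much smaller analogue of "querying and combining data where it resides," SQLite's `ATTACH DATABASE` lets a single SQL statement join tables that live in separate databases without copying them into one place first. The sketch assumes two hypothetical sources: an `orders` table in the main database and a `customers` table in an attached `crm` database. Both are in-memory and accessed through Python's `sqlite3` module.

```python
import sqlite3

# The main database plays the "lake"; the attached one plays a second system.
conn = sqlite3.connect(":memory:")
conn.execute("ATTACH DATABASE ':memory:' AS crm")

conn.execute("CREATE TABLE main.orders (order_id INTEGER, customer_id INTEGER, amount REAL)")
conn.execute("CREATE TABLE crm.customers (customer_id INTEGER, region TEXT)")
conn.executemany("INSERT INTO main.orders VALUES (?, ?, ?)",
                 [(1, 10, 25.0), (2, 10, 15.0), (3, 20, 40.0)])
conn.executemany("INSERT INTO crm.customers VALUES (?, ?)",
                 [(10, "EU"), (20, "US")])

# One statement joins data across both sources in place.
rows = conn.execute("""
    SELECT c.region, SUM(o.amount) AS revenue
    FROM main.orders AS o
    JOIN crm.customers AS c ON c.customer_id = o.customer_id
    GROUP BY c.region
    ORDER BY c.region
""").fetchall()
print(rows)  # [('EU', 40.0), ('US', 40.0)]
```

A federated engine like Presto generalizes this idea across very different storage systems through connectors. The single-process SQLite version only illustrates the query shape.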

Christophe Blefari

FEBRUARY 2, 2024

Fivetran uses DuckDB as the engine for file merges in its data lake offering. Datacamp uses DuckDB in notebooks to query dataframes in SQL and is considering it for teaching SQL (I might have something in the making about this on my side). Lastly, they pre-compute statistics on datasets with DuckDB.

Cloudera

NOVEMBER 10, 2020

Two of the more painful things in your everyday life as an analyst or SQL worker are not getting easy access to data when you need it, and not having useful, easy-to-use tools that don't get in your way! This simple statement captures the essence of almost 10 years of SQL development with modern data warehousing.

Hevo

APRIL 12, 2024

ETL processes often involve aggregating data from various sources into a data warehouse or data lake. Bucketing can be used during the transformation phase to aggregate data into predefined buckets or intervals. It plays a […]
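The following is a hedged sketch of bucketing during the transformation phase: each row is assigned to a fixed-width interval `[start, start + width)`, and the rows are then aggregated per bucket. It assumes a hypothetical `orders` table with non-negative `amount` values, since integer truncation would misplace negative ones. It uses SQLite via Python's `sqlite3` module rather than any particular warehouse.

```python
import sqlite3

def bucket_amounts(conn, width):
    """Aggregate order amounts into fixed-width buckets [start, start + width)."""
    return conn.execute(
        """
        SELECT CAST(amount / :width AS INTEGER) * :width AS bucket_start,
               COUNT(*)    AS n_orders,
               SUM(amount) AS total
        FROM orders
        GROUP BY bucket_start
        ORDER BY bucket_start
        """,
        {"width": width},
    ).fetchall()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL)")
conn.executemany("INSERT INTO orders (amount) VALUES (?)",
                 [(5.0,), (20.0,), (60.0,), (75.0,), (80.0,), (210.0,)])

buckets = bucket_amounts(conn, 50)
print(buckets)  # [(0, 2, 25.0), (50, 3, 215.0), (200, 1, 210.0)]
```

Empty intervals (here 100 to 150 and 150 to 200) simply do not appear. A reporting layer that needs every interval would join against a generated list of bucket starts.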

Seattle Data Guy

FEBRUARY 10, 2024

Photo by Tiger Lily. Data warehouses and data lakes play a crucial role for many businesses. They give businesses access to the data from all of their various systems, and they often integrate that data so that end users can answer business-critical questions.

Data Engineering Podcast

MAY 22, 2022

Acryl Data provides DataHub as an easy-to-consume SaaS product, which has been adopted by several companies. Sign up for the SaaS product at dataengineeringpodcast.com/acryl. RudderStack helps you build a customer data platform on your warehouse or data lake. Stop struggling to speed up your data lake.

Towards Data Science

MAY 3, 2023

One such tool is the Versatile Data Kit (VDK), which offers a comprehensive solution for controlling your data versioning needs. VDK helps you easily perform complex operations, such as data ingestion and processing from different sources, using SQL or Python. Use VDK to build a data lake and merge multiple sources.

Data Engineering Podcast

DECEMBER 4, 2022

Datafold built automated regression testing to help data and analytics engineers deal with data quality in their pull requests. Datafold shows how a change in SQL code affects your data, both on a statistical level and down to individual rows and values before it gets merged to production. Pricing for SQLake is simple.
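Datafold's actual tooling is not reproduced here. The sketch below only illustrates the general idea of diffing a SQL change. It runs the old and new versions of a query against the same data, then compares the row counts (a coarse statistical check) and the exact rows that each version adds or drops. The `orders` table and the `diff_query_versions` helper are hypothetical, and SQLite stands in for a real warehouse.

```python
import sqlite3
from collections import Counter

def diff_query_versions(conn, old_sql, new_sql):
    """Compare the result sets of two versions of a query."""
    # Counter keeps duplicate rows, so the comparison is multiset-aware.
    old_rows = Counter(conn.execute(old_sql).fetchall())
    new_rows = Counter(conn.execute(new_sql).fetchall())
    return {
        # Statistical level: how many rows each version returns
        "old_count": sum(old_rows.values()),
        "new_count": sum(new_rows.values()),
        # Row level: rows produced by only one of the two versions
        "removed": sorted((old_rows - new_rows).elements()),
        "added": sorted((new_rows - old_rows).elements()),
    }

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id INTEGER, status TEXT, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                 [(1, "paid", 10.0), (2, "refunded", 5.0),
                  (3, "cancelled", 7.0), (4, "paid", 3.0)])

old_sql = "SELECT order_id, amount FROM orders WHERE status = 'paid'"
new_sql = "SELECT order_id, amount FROM orders WHERE status != 'cancelled'"
report = diff_query_versions(conn, old_sql, new_sql)
print(report)
```

In this example, the rewritten filter silently starts including refunded orders. The row-level diff surfaces exactly that row, which is the kind of change a reviewer would want to see before merging.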

Rockset

AUGUST 30, 2021

Apache Kafka has made acquiring real-time data more mainstream, but only a small sliver of organizations are turning batch analytics, run nightly, into real-time analytical dashboards with alerts and automatic anomaly detection. The majority are still draining streaming data into a data lake or a warehouse and doing batch analytics.

Christophe Blefari

JANUARY 20, 2024

The Rise of the Data Engineer; The Downfall of the Data Engineer; Functional Data Engineering: A Modern Paradigm for Batch Data Processing. There is a global consensus that you need to master a programming language (Python- or Java-based) and SQL in order to be self-sufficient.

ProjectPro

FEBRUARY 16, 2023

At the heart of these data engineering skills lies SQL, which helps data engineers manage and manipulate large amounts of data. Did you know SQL is the top skill listed in 73.4% of data engineer job postings on Indeed? Almost all major tech organizations use SQL. […] use SQL, compared to 61.7% […]

Cloudera

JUNE 18, 2022

Cloudera customers run some of the biggest data lakes on earth. These lakes power mission critical large scale data analytics, business intelligence (BI), and machine learning use cases, including enterprise data warehouses. On data warehouses and data lakes. Iterations of the lakehouse.

Data Engineering Podcast

DECEMBER 11, 2022

Datafold built automated regression testing to help data and analytics engineers deal with data quality in their pull requests. Datafold shows how a change in SQL code affects your data, both on a statistical level and down to individual rows and values before it gets merged to production. Pricing for SQLake is simple.

Knowledge Hut

MARCH 28, 2024

Implemented and managed data storage solutions using Azure services like Azure SQL Database, Azure Data Lake Storage, and Azure Cosmos DB. Education & Skills Required: Proficiency in SQL, Python, or other programming languages. Develop predictive models and data-driven solutions to address business challenges.

Data Engineering Podcast

AUGUST 20, 2021

Summary: Data lakes have been gaining popularity alongside an increase in their sophistication and usability. Despite improvements in performance and data architecture, they still require significant knowledge and experience to deploy and manage. The data you're looking for is already in your data warehouse and BI tools.

Data Engineering Podcast

OCTOBER 16, 2022

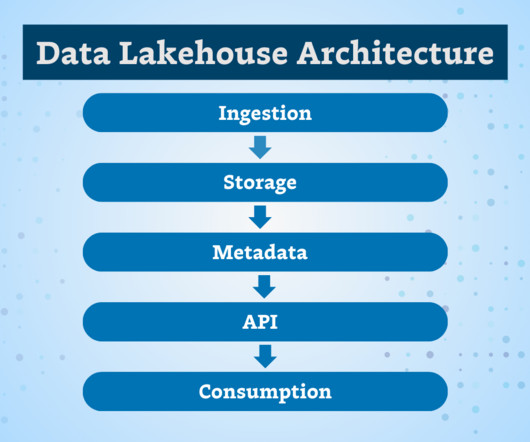

Summary: The "data lakehouse" architecture balances the scalability and flexibility of data lakes with the ease of use and transaction support of data warehouses. Mention the podcast to get a free "In Data We Trust World Tour" t-shirt. How has that history influenced the capabilities (e.g.

Monte Carlo

JANUARY 5, 2024

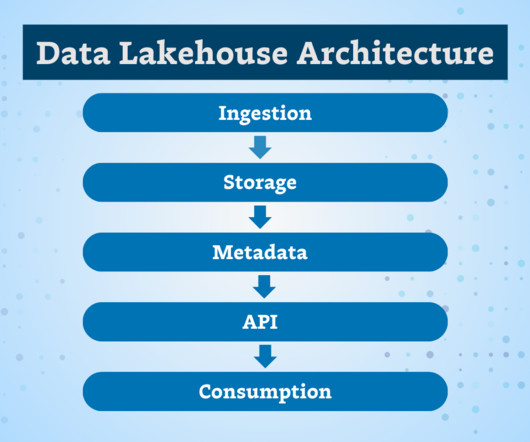

Data lakehouse architecture combines the benefits of data warehouses and data lakes, bringing together the structure and performance of a data warehouse with the flexibility of a data lake. The data lakehouse’s semantic layer also helps to simplify and open data access in an organization.

Ascend.io

AUGUST 31, 2023

Second, the rise of data lakes catalyzed the transition from ETL to ELT and paved the way for niche paradigms such as Reverse ETL and Zero-ETL. Still, these methods have been overshadowed by EtLT, the predominant approach reshaping today's data landscape.

Data Engineering Weekly

MAY 12, 2024

[link] Gradient Flow: Learning from the Past - Comparing the Hype Cycles of Big Data and GenAI. The blog compares the hype cycle of Big Data with that of GenAI. It narrates the initial hype of Big Data, followed by talent shortages and the lead time required to build production applications.

Rockset

JULY 6, 2022

Typically stored in SQL statements, the schema also defines all the tables in the database and their relationships to each other. Companies carefully engineered their ETL data pipelines to align with their schemas (not vice versa). SQL queries were easier to write, and they also ran a lot faster. There were heavy tradeoffs, though.
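To make this schema-first model concrete, here is a minimal sketch using SQLite through Python's `sqlite3` module. The schema is declared in SQL, including a relationship between two hypothetical tables, `departments` and `employees`. Any row that the pipeline tries to load and that does not fit the schema is rejected. The `load_employee` helper is hypothetical. SQLite enforces foreign keys only after `PRAGMA foreign_keys = ON`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite enforces foreign keys only when this pragma is enabled per connection.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE departments (
    department_id INTEGER PRIMARY KEY,
    name          TEXT NOT NULL
);
CREATE TABLE employees (
    employee_id   INTEGER PRIMARY KEY,
    name          TEXT NOT NULL,
    department_id INTEGER NOT NULL REFERENCES departments(department_id)
);
INSERT INTO departments VALUES (1, 'Engineering');
""")

def load_employee(conn, row):
    """Try to load one row; return False if it violates the schema."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute("INSERT INTO employees VALUES (?, ?, ?)", row)
        return True
    except sqlite3.IntegrityError:
        return False

results = [
    load_employee(conn, (1, "Ada", 1)),     # fits the schema
    load_employee(conn, (2, "Grace", 99)),  # unknown department_id
    load_employee(conn, (3, None, 1)),      # missing required name
]
print(results)  # [True, False, False]
```

This illustrates the tradeoff the excerpt alludes to. Strict schemas make downstream queries simpler and safer, but every pipeline has to be shaped to fit them before data can land.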

Monte Carlo

DECEMBER 4, 2023

So, don't forget to review your ChatGPT outputs before leveraging scripts or pushing any SQL code to production. Extraction: ChatGPT ETL prompts can be used to help write scripts that extract data from different sources, including databases. For example: "I have a SQL database with a table named employees."
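The excerpt cuts off before the full prompt. The script below is therefore only a guess at the kind of extraction code such a prompt might ask for; it is not ChatGPT's output or Monte Carlo's example. It assumes a SQLite database and a hypothetical `employees` table with `id`, `name`, and `department` columns. It writes that table to CSV using only Python's standard library. As the excerpt advises, review any generated script like this before using it.

```python
import csv
import io
import sqlite3

# Table names cannot be bound as SQL parameters, so only allow known tables.
ALLOWED_TABLES = {"employees"}

def extract_table_to_csv(conn, table, out):
    """Write every row of `table`, preceded by a header row, to file-like `out`."""
    if table not in ALLOWED_TABLES:
        raise ValueError(f"unknown table: {table}")
    # Order by the first column so the output is deterministic.
    cursor = conn.execute(f"SELECT * FROM {table} ORDER BY 1")
    writer = csv.writer(out)
    writer.writerow([col[0] for col in cursor.description])
    writer.writerows(cursor)

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employees (id INTEGER PRIMARY KEY, name TEXT, department TEXT)")
conn.executemany("INSERT INTO employees VALUES (?, ?, ?)",
                 [(1, "Ada", "Engineering"), (2, "Grace", "Analytics")])

buf = io.StringIO()
extract_table_to_csv(conn, "employees", buf)
print(buf.getvalue())
```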

Data Engineering Podcast

JANUARY 28, 2024

Announcements: Hello and welcome to the Data Engineering Podcast, the show about modern data management. Data lakes are notoriously complex. And Starburst does all of this on an open architecture with first-class support for Apache Iceberg, Delta Lake and Hudi, so you always maintain ownership of your data.

Knowledge Hut

SEPTEMBER 25, 2023

Skill Requirements for Azure Data Engineer Job Description: Here are some important skill requirements that you may find in a job description for Azure Data Engineers: 1. Azure Data Engineers work with these and other solutions. They ensure that the data is efficiently cleaned, converted, and loaded.

Knowledge Hut

NOVEMBER 17, 2023

To provide end users with a variety of ready-made models, Azure Data Engineers collaborate with Azure AI services built on top of Azure Cognitive Services APIs. Here is a step-by-step guide on how to become an Azure Data Engineer: 1. You should possess a strong understanding of data structures and algorithms.

Data Engineering Podcast

NOVEMBER 6, 2022

RudderStack helps you build a customer data platform on your warehouse or data lake. Instead of trapping data in a black box, they enable you to easily collect customer data from the entire stack and build an identity graph on your warehouse, giving you full visibility and control.

Data Engineering Podcast

JANUARY 13, 2019

Links TimescaleDB Original Appearance on the Data Engineering Podcast 1.0

Monte Carlo

DECEMBER 29, 2022

Data Observability: How to Build Your Own Data Anomaly Detectors Using SQL. The same author, Monte Carlo data scientist Ryan Kearns, dives into how to build machine-learning-driven data anomaly detectors using SQL. In fact, our original prototype only covered about 80% of possible combinations!
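The article's detectors are machine-learning driven. The sketch below swaps in a much simpler statistical rule to show how far plain SQL can go. It flags days whose row count lies more than `k` standard deviations from the mean, computed as a population variance. Squared values are compared, so no `sqrt()` function is required. The `daily_row_counts` table and the `find_anomalies` helper are hypothetical. In a short series, the outlier itself inflates the mean and variance, so a modest `k` is used. A real detector would more likely compute its baseline over a trailing window that excludes the day being tested.

```python
import sqlite3

# Flag days whose row count is more than k standard deviations from the mean.
# Comparing squared deviation to k^2 * variance avoids needing sqrt() in SQL.
ANOMALY_SQL = """
WITH stats AS (
    SELECT AVG(row_count) AS mean,
           AVG(row_count * row_count) - AVG(row_count) * AVG(row_count) AS var
    FROM daily_row_counts
)
SELECT d.day, d.row_count
FROM daily_row_counts AS d CROSS JOIN stats AS s
WHERE (d.row_count - s.mean) * (d.row_count - s.mean) > :k_sq * s.var
ORDER BY d.day
"""

def find_anomalies(conn, k=2.0):
    return conn.execute(ANOMALY_SQL, {"k_sq": k * k}).fetchall()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE daily_row_counts (day TEXT PRIMARY KEY, row_count INTEGER)")
counts = [100, 102, 98, 101, 99, 100, 103, 20]  # the last day drops sharply
conn.executemany("INSERT INTO daily_row_counts VALUES (?, ?)",
                 [(f"2024-01-{i:02d}", c) for i, c in enumerate(counts, start=1)])

anomalies = find_anomalies(conn)
print(anomalies)  # [('2024-01-08', 20)]
```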

Data Engineering Podcast

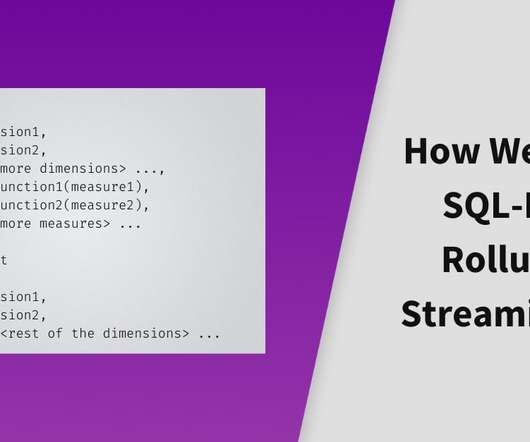

FEBRUARY 4, 2024

Summary: Stream processing systems have long been built with a code-first design, adding SQL as a layer on top of the existing framework. In this episode Yingjun Wu explains how it is architected to power analytical workflows on continuous data flows, and the challenges of making it responsive and scalable. Starburst:

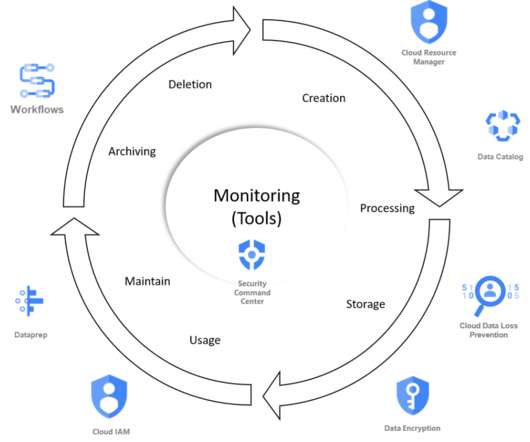

François Nguyen

MARCH 22, 2021

With this third platform generation, you get more real-time data analytics and lower costs, because managed services make this infrastructure easier to run in the cloud. The data domain discovery portal, with all the metadata on the data life cycle. 4. Federated: the number of subjects to automate is not short.

Knowledge Hut

NOVEMBER 2, 2023

Azure Data Ingestion Pipeline Create an Azure Data Factory data ingestion pipeline to extract data from a source (e.g., CSV, SQL Server), transform it, and load it into a target storage (e.g., Azure SQL Database, Azure Data Lake Storage). A strong understanding of data sourcing with SQL.

Snowflake

DECEMBER 4, 2023

With this public preview, those external catalog options are either “GLUE”, where Snowflake can retrieve table metadata snapshots from AWS Glue Data Catalog, or “OBJECT_STORE”, where Snowflake retrieves metadata snapshots directly from the specified cloud storage location. With these three options, which one should you use?