What is Data Extraction? Examples, Tools & Techniques

Knowledge Hut

JANUARY 30, 2024

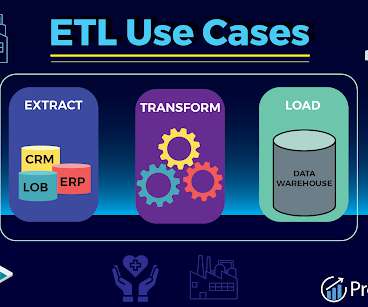

Whether the task is aggregating customer interactions, analyzing historical sales trends, or processing real-time sensor data, data extraction is the first step in the process. What is the purpose of extracting data? It is to transform large, unwieldy datasets into a usable and actionable format.
