Data Integrity vs. Data Validity: Key Differences with a Zoo Analogy

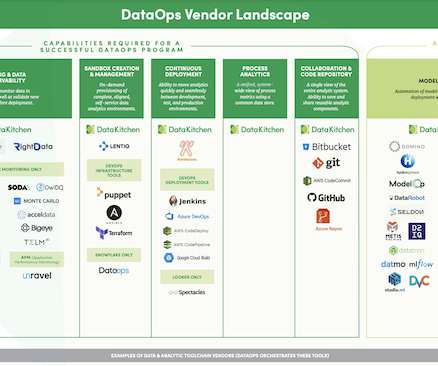

Monte Carlo

MARCH 24, 2023

The data doesn’t accurately represent the real heights of the animals, so it lacks validity. Let’s dive deeper into these two crucial concepts, both essential for maintaining high-quality data.

What Is Data Validity?
