Adding Anomaly Detection And Observability To Your dbt Projects Is Elementary

Data Engineering Podcast

MARCH 31, 2024

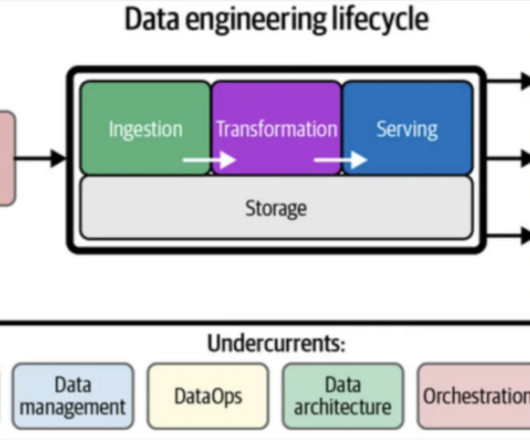

While numerous products offer data observability, each focuses on different technologies and workflows. To bring observability to dbt projects, the team at Elementary embedded themselves into the dbt workflow. How have the scope and goals of the project changed since you started working on it?
