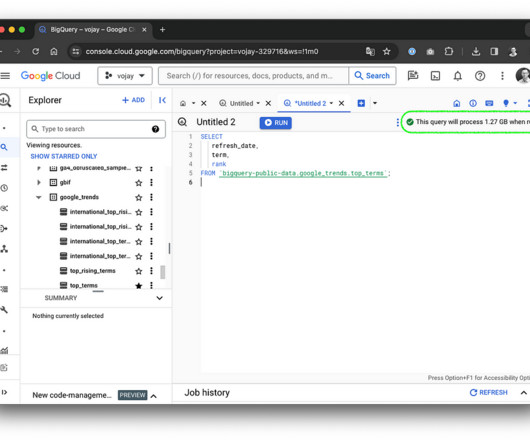

A Definitive Guide to Using BigQuery Efficiently

Towards Data Science

MARCH 5, 2024

Introduction

In the field of data warehousing, there’s a universal truth: managing data can be costly. Like a dragon guarding its treasure, each byte stored and each query executed demands its share of gold coins. But let me give you a magical spell to appease the dragon: burn data, not money!
