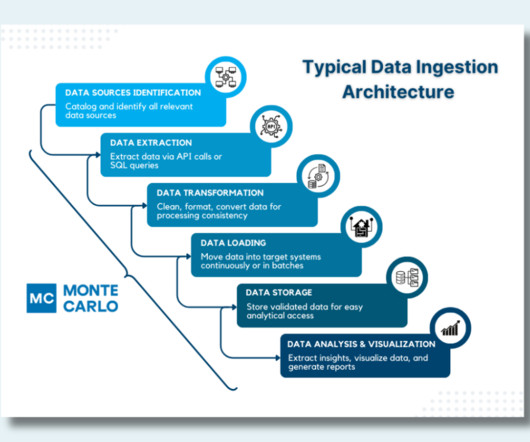

How to Design a Modern, Robust Data Ingestion Architecture

Monte Carlo

MAY 28, 2024

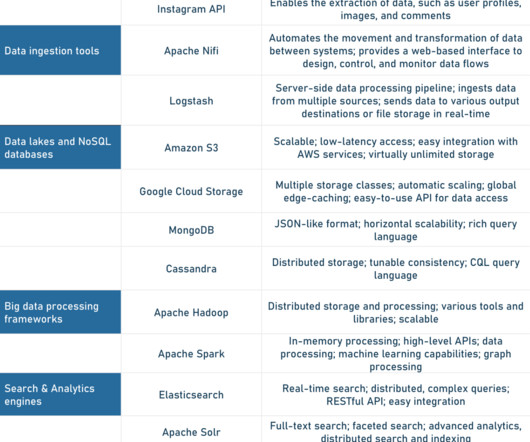

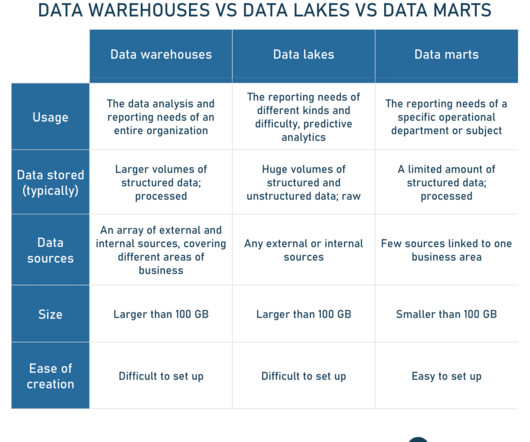

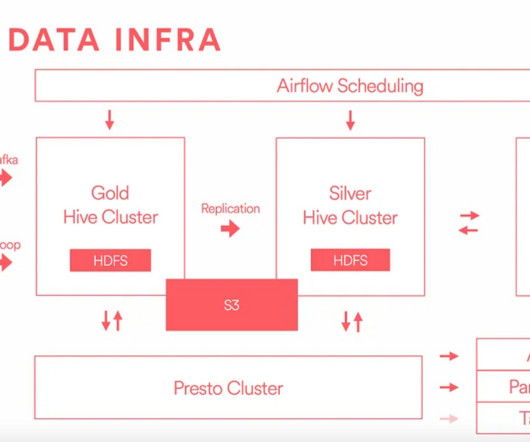

A data ingestion architecture is the technical blueprint that ensures every pulse of your organization’s data ecosystem brings critical information to where it’s needed most.

Data Transformation: Clean, format, and convert extracted data to ensure consistency and usability for both batch and real-time processing.
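The article does not say how the transformation step is implemented, so what follows is only a minimal sketch. It assumes that extracted records arrive as Python dictionaries and that the target schema (`event_id`, `amount`, `created_at`) is hypothetical. The idea it shows is that the batch path and the real-time path call the same `transform_record` function, which keeps their output consistent. The helper names `transform_batch` and `transform_stream` are also illustrative, not part of any specific tool.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Iterable, Iterator, List, Optional

# Hypothetical target schema: field names and types are illustrative assumptions.
REQUIRED_FIELDS = ("event_id", "amount", "created_at")


def transform_record(raw: Dict[str, Any]) -> Optional[Dict[str, Any]]:
    """Clean, format, and convert one extracted record.

    Returns None when the record cannot be made consistent, so callers
    can drop or quarantine it.
    """
    # Clean/format: normalize keys (trim, lowercase, spaces -> underscores),
    # trim string values, and treat empty strings as missing.
    cleaned: Dict[str, Any] = {}
    for key, value in raw.items():
        norm_key = key.strip().lower().replace(" ", "_")
        if isinstance(value, str):
            value = value.strip()
            if value == "":
                value = None
        cleaned[norm_key] = value

    # Reject records that are missing any required field.
    if any(cleaned.get(field) is None for field in REQUIRED_FIELDS):
        return None

    # Convert: cast the amount to float and the timestamp to UTC ISO 8601.
    try:
        amount = float(cleaned["amount"])
        ts = datetime.fromisoformat(str(cleaned["created_at"]))
    except (TypeError, ValueError):
        return None
    if ts.tzinfo is None:
        # Assumption: naive timestamps from the source are already UTC.
        ts = ts.replace(tzinfo=timezone.utc)

    return {
        "event_id": str(cleaned["event_id"]),
        "amount": amount,
        "created_at": ts.astimezone(timezone.utc).isoformat(),
    }


def transform_batch(records: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Batch path: transform a complete extract in one pass."""
    return [r for r in map(transform_record, records) if r is not None]


def transform_stream(records: Iterable[Dict[str, Any]]) -> Iterator[Dict[str, Any]]:
    """Real-time path: transform records lazily as they arrive."""
    for raw in records:
        result = transform_record(raw)
        if result is not None:
            yield result


if __name__ == "__main__":
    sample = [
        {" Event ID ": "e1", "Amount": " 12.50 ", "Created At": "2024-05-28T10:00:00+02:00"},
        {"event_id": "e2", "amount": "", "created_at": "2024-05-28T11:00:00"},
    ]
    print(transform_batch(sample))
    # [{'event_id': 'e1', 'amount': 12.5, 'created_at': '2024-05-28T08:00:00+00:00'}]
```

In this sketch the second sample record is dropped because its amount is empty. A production pipeline would more likely route such records to a quarantine table than discard them silently. Keeping a single per-record function also means a streaming consumer and a nightly batch job cannot drift apart in how they clean the same data.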
