What is Data Extraction? Examples, Tools & Techniques

Knowledge Hut

JANUARY 30, 2024

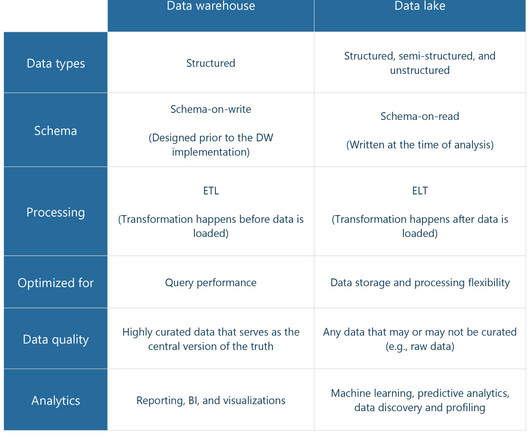

Goal: To extract and transform data from its raw form into a structured format for analysis. To uncover hidden knowledge and meaningful patterns in data for decision-making.

Data Source: Typically starts with unprocessed or poorly structured data sources. Analyzing and deriving valuable insights from data.
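As a rough illustration of moving data from a raw form into a structured one, the sketch below parses free-form text lines into typed records using Python's standard `re` module. The input lines, the field names (`date`, `order`, `customer`, `total`), and the `extract_records` helper are all hypothetical, made up for this example and not taken from the article.

```python
import re

# Hypothetical raw input: free-form order lines (invented for illustration).
RAW_LINES = [
    "2024-01-30 order=1001 customer=Alice total=19.99",
    "2024-01-30 order=1002 customer=Bob total=5.00",
    "malformed line without fields",
]

# Named groups give each extracted field a label.
PATTERN = re.compile(
    r"(?P<date>\d{4}-\d{2}-\d{2}) order=(?P<order>\d+) "
    r"customer=(?P<customer>\w+) total=(?P<total>\d+\.\d{2})"
)


def extract_records(lines):
    """Return one dict per line that matches PATTERN; skip lines that do not."""
    records = []
    for line in lines:
        match = PATTERN.fullmatch(line)
        if match is None:
            continue  # unstructured noise is dropped, not guessed at
        row = match.groupdict()
        # Transform step: convert string fields to numeric types.
        row["order"] = int(row["order"])
        row["total"] = float(row["total"])
        records.append(row)
    return records


records = extract_records(RAW_LINES)
for record in records:
    print(record)
```

The resulting list of dictionaries could then be loaded into a table or data frame for analysis. Real sources (PDFs, web pages, databases) would likely need dedicated parsers rather than a single regular expression.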
