Data Pipeline: Definition, Architecture, Examples, and Use Cases

ProjectPro

DECEMBER 7, 2021

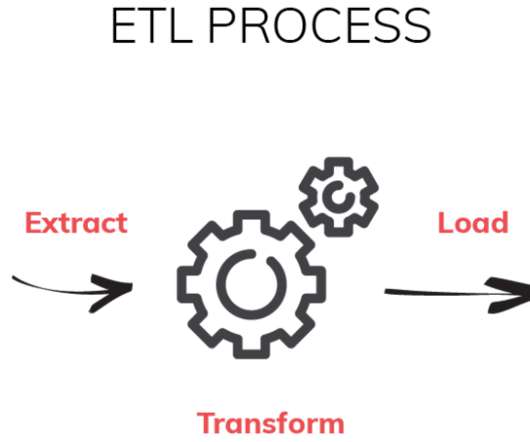

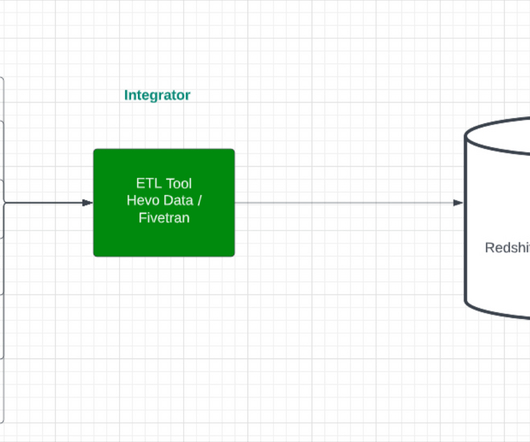

A data pipeline may include filtering, normalization, and consolidation steps that turn raw input into the data its consumers need. Broadly, two types of data flow through a data pipeline: structured and unstructured.

Step 2: Internal data transformation at the LakeHouse.
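To make the filtering, normalizing, and consolidation stages mentioned above more concrete, here is a minimal sketch in plain Python. The record layout (`user_id`, `amount`) and the function names `filter_records`, `normalize_records`, `consolidate`, and `run_pipeline` are hypothetical, chosen only for illustration; a real pipeline would typically use a dedicated framework and its own schema.

```python
from collections import defaultdict


def filter_records(records):
    """Filtering: drop records that lack a user id or an amount."""
    return [
        r for r in records
        if r.get("user_id") and r.get("amount") is not None
    ]


def normalize_records(records):
    """Normalizing: trim and lowercase ids, coerce amounts to float."""
    return [
        {
            "user_id": str(r["user_id"]).strip().lower(),
            "amount": float(r["amount"]),
        }
        for r in records
    ]


def consolidate(records):
    """Consolidation: sum the amounts per user id."""
    totals = defaultdict(float)
    for r in records:
        totals[r["user_id"]] += r["amount"]
    return dict(totals)


def run_pipeline(records):
    """Chain the three stages in order."""
    return consolidate(normalize_records(filter_records(records)))


# Hypothetical raw input with inconsistent formatting and missing values.
raw = [
    {"user_id": " Alice ", "amount": "10.5"},
    {"user_id": "alice", "amount": 4.5},
    {"user_id": None, "amount": 3},
    {"user_id": "Bob", "amount": None},
    {"user_id": "bob", "amount": "2"},
]

result = run_pipeline(raw)
print(result)
```

Each stage is a small function that takes a list of records and returns a new one, so stages can be tested, reordered, or replaced independently. This sketch handles only structured records; unstructured data would need a parsing or extraction stage before these steps.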
